In the structure, ∑ marks the weighted sum of a neuron's inputs, which is then passed to the activation function. The activation function acts as a decision-maker and allows only certain information to fire forward through the network towards the output layer.

Input -> weighted sum (matrix multiplication) -> activation -> output

Here, the activation function decides which features or information to fire forward towards the output in order to minimize error. The sigmoid function and softmax are generally preferred by data scientists and machine learning engineers; tanh is another widely used and accepted activation function.

This is the structure followed by neural networks. First, we have an input layer, which holds the dataset (either labeled or unlabeled). Then there are hidden layers. We can use as many hidden layers as we want, since their job is to extract informative features from the dataset, but we must choose the number wisely: too many hidden layers can lead to overfitting, which can reduce the accuracy of our model. Lastly, we have the output layer, which gives the results.
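To make the flow from input to output concrete, here is a minimal sketch in plain Python of one forward pass through a single hidden layer and an output layer. The weights, biases, layer sizes, and the helper names `dense` and `forward` are made up for illustration; a real network learns its weights during training, and a library such as Keras would handle these steps for you.

```python
import math

def sigmoid(z):
    """Squash a value into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(zs):
    """Turn a list of scores into probabilities that sum to 1."""
    m = max(zs)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases):
    """Weighted sum for each neuron: sum of w_i * x_i, plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

def forward(x, hidden_w, hidden_b, out_w, out_b, activation=sigmoid):
    """Input -> weighted sum -> activation -> output."""
    hidden = [activation(z) for z in dense(x, hidden_w, hidden_b)]
    return softmax(dense(hidden, out_w, out_b))

# Hypothetical example values: 3 inputs, 2 hidden neurons, 2 outputs.
x = [0.5, -1.0, 2.0]
hidden_w = [[0.1, 0.2, 0.3], [-0.3, 0.1, 0.2]]
hidden_b = [0.0, 0.1]
out_w = [[0.5, -0.5], [-0.2, 0.4]]
out_b = [0.0, 0.0]

probs = forward(x, hidden_w, hidden_b, out_w, out_b)
print(probs)  # two probabilities that sum to 1
```

Passing `activation=math.tanh` to `forward` swaps the hidden-layer activation from sigmoid to tanh without changing anything else, which is an easy way to compare the two.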

Inputs x1, x2, x3, which contain information, are associated with weights w1, w2, w3. Together they act as the input layer, and the weighted values are stored in matrix form and passed on to the hidden layers.

Neurons in the brain have three most important features:

Dendrites: these give input to the nucleus of the neuron.

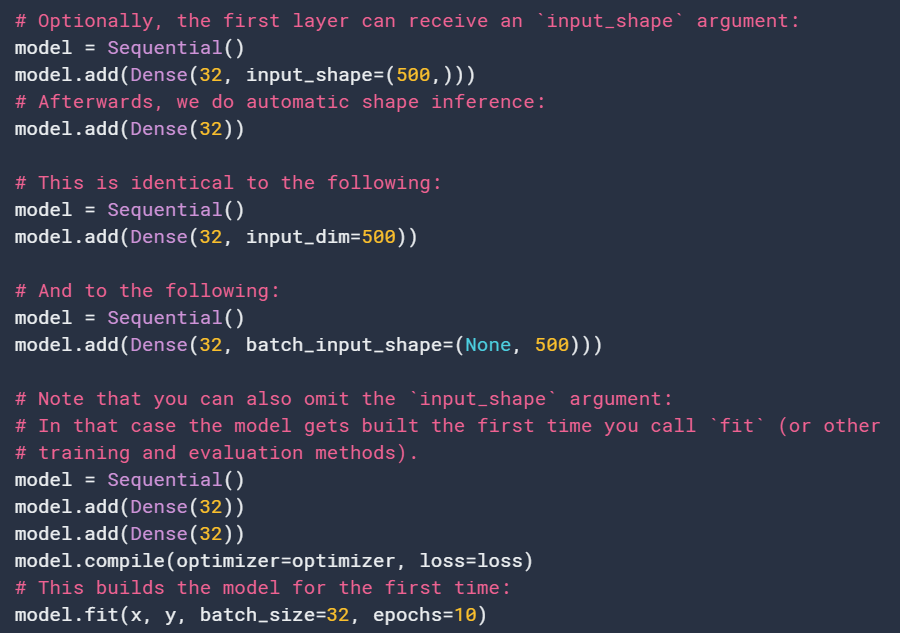

Let’s see the basic structure of neurons working in the human brain.

Neural Network Implementation Using Keras Functional API

So, how do the neurons in the human brain work? And how has this structure helped neural networks and deep learning? Let’s discuss all of this. Neural networks are so named because they are inspired by the working of the human brain’s neurons. The main idea behind machine learning is to give our machines human brain-like abilities, and neural networks are therefore a boon to this idea.
